What Are the Most Common Causes of Equivocal Results?

- Over-optimistic power calculation

- using an effect size larger than the clinically relevant effect

- if the true effect is "only" clinically relevant, the study is likely to miss it

- Fixed sample size

- Losing power in the belief that α is something that should be controlled when taking multiple data looks

- Insensitive outcome measure

Another Common Outcome

- Little evidence the treatment works

- Usually the study could have been stopped much sooner

- Q: How far along can a trial be and still have a good chance for a positive study if difference is negative?

- A:

The Problem With Sample Size

- Frequentist (traditional statistics): need a final sample size to know when α is "spent"

- Fixed budgeting also requires a maximum sample size

- Sample size calculations are voodoo

- arbitrary α, β, effect to detect Δ

- requires accurate σ or event incidence

- Physics approach: experiment until you have the answer
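To see how much the answer depends on the arbitrary inputs, here is a minimal sketch of the textbook per-group sample size for comparing two means. It assumes a two-sided z-test, 1:1 allocation and a known σ; the function name `n_per_group` and the chosen inputs are hypothetical illustrations.

```python
import math
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.90):
    """Per-group n for a two-sided z-test of two means with 1:1 allocation."""
    z = NormalDist().inv_cdf
    z_alpha = z(1 - alpha / 2)  # critical value for the two-sided alpha
    z_beta = z(power)           # quantile matching the target power (1 - beta)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / delta ** 2)


base = n_per_group(delta=0.5, sigma=1.0)
sigma_off = n_per_group(delta=0.5, sigma=1.2)  # true sigma 20% larger than assumed
delta_off = n_per_group(delta=0.4, sigma=1.0)  # effect to detect 20% smaller
print(base, sigma_off, delta_off)
```

This prints 85, 122 and 132 per group: because n scales with σ²/Δ², a 20% misjudgment of either σ or Δ changes the required sample size by roughly 45% to 55%.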

Is a Sample Size Calculation Needed?

- No, if using a Bayesian sequential design

- With Bayes, study extension is trivial and requires no α penalty

- logical to recruit more patients if result is promising but not definitive (0.7 < P(benefit) < 0.95)

- analysis of cumulative data after new data added merely supersedes analysis of previous data
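Why extension needs no penalty can be checked in the simplest conjugate setting. This is a minimal sketch, assuming normally distributed patient responses with known SD, a normal prior on the mean effect, and a hypothetical true effect of 0.3 (positive = benefit); `normal_update` and `p_benefit` are illustrative names. Using the look-1 posterior as the prior for the added patients gives exactly the posterior from analyzing all cumulative data once, so the new analysis simply supersedes the old one.

```python
import math
import random
from statistics import NormalDist


def normal_update(prior_mean, prior_var, data, sigma):
    """Conjugate normal update for a mean effect, with known per-patient SD sigma."""
    post_prec = 1 / prior_var + len(data) / sigma ** 2
    post_mean = (prior_mean / prior_var + sum(data) / sigma ** 2) / post_prec
    return post_mean, 1 / post_prec


def p_benefit(mean, var):
    """Posterior P(effect > 0), treating a positive effect as benefit."""
    return 1 - NormalDist(mean, math.sqrt(var)).cdf(0.0)


rng = random.Random(7)
sigma = 1.0
first = [rng.gauss(0.3, sigma) for _ in range(50)]  # hypothetical true effect 0.3
extra = [rng.gauss(0.3, sigma) for _ in range(30)]  # patients added after extension

m1, v1 = normal_update(0.0, 1.0, first, sigma)                # look 1
m2, v2 = normal_update(m1, v1, extra, sigma)                  # look-1 posterior as prior
m_all, v_all = normal_update(0.0, 1.0, first + extra, sigma)  # cumulative analysis

print(round(p_benefit(m1, v1), 3), round(p_benefit(m2, v2), 3))
# Sequential updating and the single cumulative analysis agree
assert math.isclose(m2, m_all, abs_tol=1e-12) and math.isclose(v2, v_all, abs_tol=1e-12)
```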

Is α a Good Thing to Control?

- α = type I assertion probability = P(p < 0.05 | H0) typically

- It is not the probability of making an error in acting as if a treatment works

- It is the probability of making an assertion of efficacy (rejecting H0) when any assertion of efficacy would by definition be wrong (i.e., under H0)

- α ⇑ as # data looks ⇑
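The growth of α with the number of looks can be reproduced by simulating trials in which H0 is true. This minimal sketch assumes standard normal responses, an unadjusted two-sided z-test (|z| > 1.96) at each look, and stopping at the first "significant" look; `type1_rate` and its defaults are hypothetical choices for illustration.

```python
import math
import random


def type1_rate(n_max=100, look_every=10, n_sims=4000, z_crit=1.96, seed=2024):
    """Fraction of null trials declared 'significant' at any unadjusted look."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_sims):
        total = 0.0
        for i in range(1, n_max + 1):
            total += rng.gauss(0.0, 1.0)  # H0 true: mean 0, SD 1
            if i % look_every == 0:
                z = total / math.sqrt(i)  # z statistic for the running mean
                if abs(z) > z_crit:
                    rejections += 1
                    break  # stop at the first "significant" look
    return rejections / n_sims


one_look = type1_rate(look_every=100)  # single final analysis
ten_looks = type1_rate(look_every=10)  # ten equally spaced, unadjusted looks
print(one_look, ten_looks)
```

The single-look rate should land near 0.05, while ten unadjusted looks push it to roughly 0.19 (up to Monte Carlo noise), which is the quantity frequentist designs then "spend" α to hold down.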

Bayesian vs. Frequentist Designs

- Controlling α leads to conservatism when there are multiple data looks

- Bayesian sequential designs: expected sample size at time of stopping study for efficacy/harm/inefficacy ⇓ as # looks ⇑

- Example

- Frequentist group-sequential design vs. Bayesian continuous sequential design

- Decision rules for non-trivial efficacy, inefficacy, similarity

- Average stopping time for frequentist: 273 treated patients

- Bayesian: 113

What Should We Control?

- The probability of being wrong in acting as if a treatment works

- This is one minus the Bayesian posterior probability of efficacy (probability of inefficacy or harm)

- Controlled by the prior distribution (+ data, statistical model, outcome measure, sample size)

- Example: P(HR < 1 | current data, prior) = 0.96 ⇒ P(HR ≥ 1) = 0.04 (inefficacy or harm)
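A posterior probability like this can be sketched with a normal approximation on the log hazard ratio. This assumes the data are summarized by an estimated HR and the standard error of its log, and that the prior on log HR is normal; the HR of 0.80, SE of 0.12 and prior SD of 0.5 are hypothetical inputs, and `posterior_prob_benefit` is an illustrative name, not a library function.

```python
import math
from statistics import NormalDist


def posterior_prob_benefit(hr_hat, se_log_hr, prior_sd, prior_mean=0.0):
    """P(HR < 1 | data, prior) under a normal approximation for log(HR)."""
    b = math.log(hr_hat)
    prec = 1 / prior_sd ** 2 + 1 / se_log_hr ** 2  # posterior precision
    mean = (prior_mean / prior_sd ** 2 + b / se_log_hr ** 2) / prec
    posterior = NormalDist(mean, math.sqrt(1 / prec))
    return posterior.cdf(0.0)  # P(log HR < 0)


p_benefit = posterior_prob_benefit(hr_hat=0.80, se_log_hr=0.12, prior_sd=0.5)
p_no_benefit = 1 - p_benefit  # P(HR >= 1): inefficacy or harm
print(round(p_benefit, 3), round(p_no_benefit, 3))
```

With these inputs P(HR < 1) comes out at about 0.96; a more skeptical prior (smaller `prior_sd`) pulls it down, which is the sense in which the prior helps control the probability of being wrong.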

The Shocking Truth of Bayes

- It controls the reliability of evidence at the decision point

- Not the pre-study tendency for data extremes under an unknowable assumption

- Simulation examples: bit.ly/bayesOp

Avoid Low-Resolution Outcome Variables

- When an event has multiple severity levels or is recurring:

- Significant loss of power with Y = time to first event

- Need 462 events to estimate a hazard ratio to within a factor of 1.20 (from 0.95 CI)

- Need 384 patients to estimate a difference in means to within 0.2 SD (n = 96 for crossover design)
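These numbers follow from the width of a 0.95 confidence interval. A minimal sketch, assuming 1:1 allocation, SE(log HR) ≈ 2/√d for d total events, and a known SD for the means; for the crossover case it assumes a within-patient correlation of 0.5, which the slide does not state but which reproduces n = 96. Function names are hypothetical.

```python
import math
from statistics import NormalDist

Z = NormalDist().inv_cdf(0.975)  # about 1.96 for a 0.95 interval


def events_for_hr(fold=1.20):
    """Events so the 0.95 CI runs from HR/fold to HR*fold (1:1 allocation)."""
    # With d/2 events per arm, SE(log HR) ~ sqrt(1/(d/2) + 1/(d/2)) = 2/sqrt(d)
    return (2 * Z / math.log(fold)) ** 2


def n_for_mean_diff(margin_sd=0.2, crossover=False, rho=0.5):
    """Total N (parallel) or n (crossover) for a 0.95 CI half-width of margin_sd SDs."""
    if crossover:
        # Each patient gives a within-patient difference with variance 2(1 - rho)sigma^2
        return Z ** 2 * 2 * (1 - rho) / margin_sd ** 2
    # Two arms of N/2 each: SE of the difference = 2 * sigma / sqrt(N)
    return (2 * Z / margin_sd) ** 2


print(round(events_for_hr()), round(n_for_mean_diff()), round(n_for_mean_diff(crossover=True)))
```

This prints 462, 384 and 96, matching the slide under the stated assumptions.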

General Outcome Attributes

- Timing and severity of outcomes

- Handle

- terminal events (death)

- non-terminal severe events (stroke)

- recurrent events (seizures)

- Break the ties; the more levels of Y the better

- Maximum power when no two patients have the same Y
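One way to quantify the cost of ties is Whitehead's approximation for the proportional odds model: with overall category probabilities p_j, an ordinal Y has about 1 − Σ p_j³ of the efficiency of a Y with no ties. This minimal sketch assumes proportional odds and a modest treatment effect, and the category probabilities are hypothetical; `tie_efficiency` is an illustrative name.

```python
def tie_efficiency(probs):
    """Whitehead's approximate efficiency of an ordinal Y relative to a tie-free Y."""
    total = sum(probs)
    return 1 - sum((p / total) ** 3 for p in probs)


binary_balanced = tie_efficiency([0.5, 0.5])  # 50% event rate
binary_rare = tie_efficiency([0.9, 0.1])      # 10% event rate
five_levels = tie_efficiency([0.2] * 5)       # five equally likely levels
no_ties = tie_efficiency([1 / 200] * 200)     # 200 patients, all distinct
print(binary_balanced, round(binary_rare, 2), round(five_levels, 2), round(no_ties, 5))
```

A binary outcome with a 10% event rate keeps only about 27% of the information of a tie-free Y, five equally used levels keep about 96%, and efficiency approaches 1 when no two patients share a value.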

What is a Fundamental Outcome Assessment?

- In a given week or day what is the severity of the worst thing that happened to the patient?

- Expert clinician consensus of outcome ranks

- Spacing of outcome categories irrelevant

- Can code common co-occurring events as worse event

Clinical Readouts

- Can translate an ordinal longitudinal model to obtain a variety of estimates

- time until a condition

- expected time in state

- probability of something bad or worse happening to the patient over time, by treatment

- Bayesian partial proportional odds model can compute the probability that the treatment affects mortality differently than it affects nonfatal outcomes
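As an illustration of how a Markov-type longitudinal model yields these readouts, the sketch below propagates daily state probabilities through a first-order Markov chain. In a real analysis the transition probabilities would come from the fitted proportional odds Markov model (conditional on the previous state and covariates); here the matrices, the three states and the 28-day horizon are hypothetical. Expected time in each state is the sum of the daily occupancy probabilities.

```python
def occupancy(transition, start, days):
    """State occupancy probabilities for days 1..days of a first-order Markov chain."""
    probs = list(start)
    history = []
    for _ in range(days):
        probs = [sum(probs[i] * transition[i][j] for i in range(len(probs)))
                 for j in range(len(probs))]
        history.append(probs)
    return history


# Hypothetical daily transition probabilities: rows = yesterday, columns = today
# States: 0 = well, 1 = sick, 2 = dead (absorbing)
control = [[0.90, 0.08, 0.02],
           [0.20, 0.75, 0.05],
           [0.00, 0.00, 1.00]]
treated = [[0.93, 0.06, 0.01],
           [0.25, 0.72, 0.03],
           [0.00, 0.00, 1.00]]

results = {}
for name, tm in [("control", control), ("treated", treated)]:
    hist = occupancy(tm, [0.0, 1.0, 0.0], days=28)  # everyone starts sick
    days_in_state = [sum(day[s] for day in hist) for s in range(3)]  # expected days
    p_sick_or_worse = hist[-1][1] + hist[-1][2]  # day-28 P(state >= sick)
    results[name] = (days_in_state, p_sick_or_worse)
    print(name, [round(d, 1) for d in days_in_state], round(p_sick_or_worse, 3))
```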

Examples of Longitudinal Ordinal Outcomes

- 0=alive 1=dead (absorbing state; cease data collection)

- censored at 3w: 000

- death at 2w: 01

- longitudinal binary logistic model OR ≅ HR
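The approximation holds because each period's logistic model describes the probability of dying in that period given survival to its start (a discrete hazard), and when that probability is small the odds are close to the probability. A minimal arithmetic sketch, assuming a hypothetical treatment that multiplies the weekly death probability by 0.7:

```python
def odds(p):
    """Convert a probability to odds."""
    return p / (1 - p)


def weekly_or_and_hr(h_treated, h_control):
    """Odds ratio vs. discrete hazard ratio for the probability of dying in one week."""
    return odds(h_treated) / odds(h_control), h_treated / h_control


for h_control in (0.01, 0.05, 0.20):
    # Hypothetical: treatment multiplies the weekly death probability by 0.7
    or_, hr = weekly_or_and_hr(0.7 * h_control, h_control)
    print(f"weekly risk {h_control:.2f}: OR = {or_:.3f}, HR = {hr:.3f}")
```

The OR is 0.698 and 0.689 at weekly risks of 1% and 5% but drifts to 0.651 at 20%, so the approximation is best when each period's risk is small.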

Ordinal Example

- 0=OK, 1=seizure, 2=status epilepticus, 3=hospitalized, 4=treatment failure, 5=death

- Treatment failure: escape criterion: e.g.

- Absorbing states: treatment failure, death

- Seizure at 3d, status at 5d, hospitalized at 9d-11d, death at 12d: 001020003335
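The coding rule (record the worst thing that happened in each period, stop collecting after an absorbing state) can be sketched as a small function. The function name `encode_periods` and the `(first_period, last_period, level)` event format are hypothetical; the examples reproduce the sequences shown on these slides.

```python
def encode_periods(n_periods, events, absorbing):
    """Worst outcome level per period as a digit string, stopping at an absorbing state.

    events: list of (first_period, last_period, level) tuples; periods are 1-based.
    """
    codes = []
    for t in range(1, n_periods + 1):
        worst = max((lvl for first, last, lvl in events if first <= t <= last), default=0)
        codes.append(worst)
        if worst in absorbing:
            break  # absorbing state: cease data collection
    return "".join(str(c) for c in codes)


# Scale: 0=OK, 1=seizure, 2=status epilepticus, 3=hospitalized,
#        4=treatment failure, 5=death (4 and 5 absorbing)
daily = encode_periods(28, [(3, 3, 1), (5, 5, 2), (9, 11, 3), (12, 12, 5)], absorbing={4, 5})

# Binary weekly scale: 0=alive, 1=dead (absorbing)
censored_3w = encode_periods(3, [], absorbing={1})
death_2w = encode_periods(3, [(2, 3, 1)], absorbing={1})
print(daily, censored_3w, death_2w)
```

This prints `001020003335 000 01`.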

Statistical Model

- Proportional odds ordinal logistic model with covariate adjustment

- Handles intra-patient correlation with a Markov process + random intercepts

- Extension of binary logistic model

- Generalization of Wilcoxon-Mann-Whitney Two-Sample Test

- No assumption about Y distribution for a given patient type

- Does not use the numeric Y codes
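The Wilcoxon-Mann-Whitney connection also shows why the numeric codes do not matter: its concordance probability depends only on which of two values is larger. This minimal sketch uses hypothetical worst-outcome codes and an arbitrary order-preserving relabeling; the proportional odds model shares this property because only the ordering of the categories enters its likelihood.

```python
def concordance(a, b):
    """P(A > B) + 0.5 * P(A = B) over all pairs: the WMW U statistic / (n_a * n_b)."""
    wins = sum((x > y) + 0.5 * (x == y) for x in a for y in b)
    return wins / (len(a) * len(b))


control = [0, 0, 1, 2, 3, 5, 0, 1]  # hypothetical worst-outcome codes
treated = [0, 0, 0, 1, 1, 3, 0, 0]

recode = {0: 0, 1: 1, 2: 10, 3: 50, 4: 99, 5: 1000}  # any strictly increasing relabeling
c_raw = concordance(treated, control)
c_recoded = concordance([recode[y] for y in treated], [recode[y] for y in control])
print(c_raw, c_recoded)
assert c_raw == c_recoded  # only the ordering of the codes matters
```

Values below 0.5 here mean treated patients tend to have less severe outcomes.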

Example Study

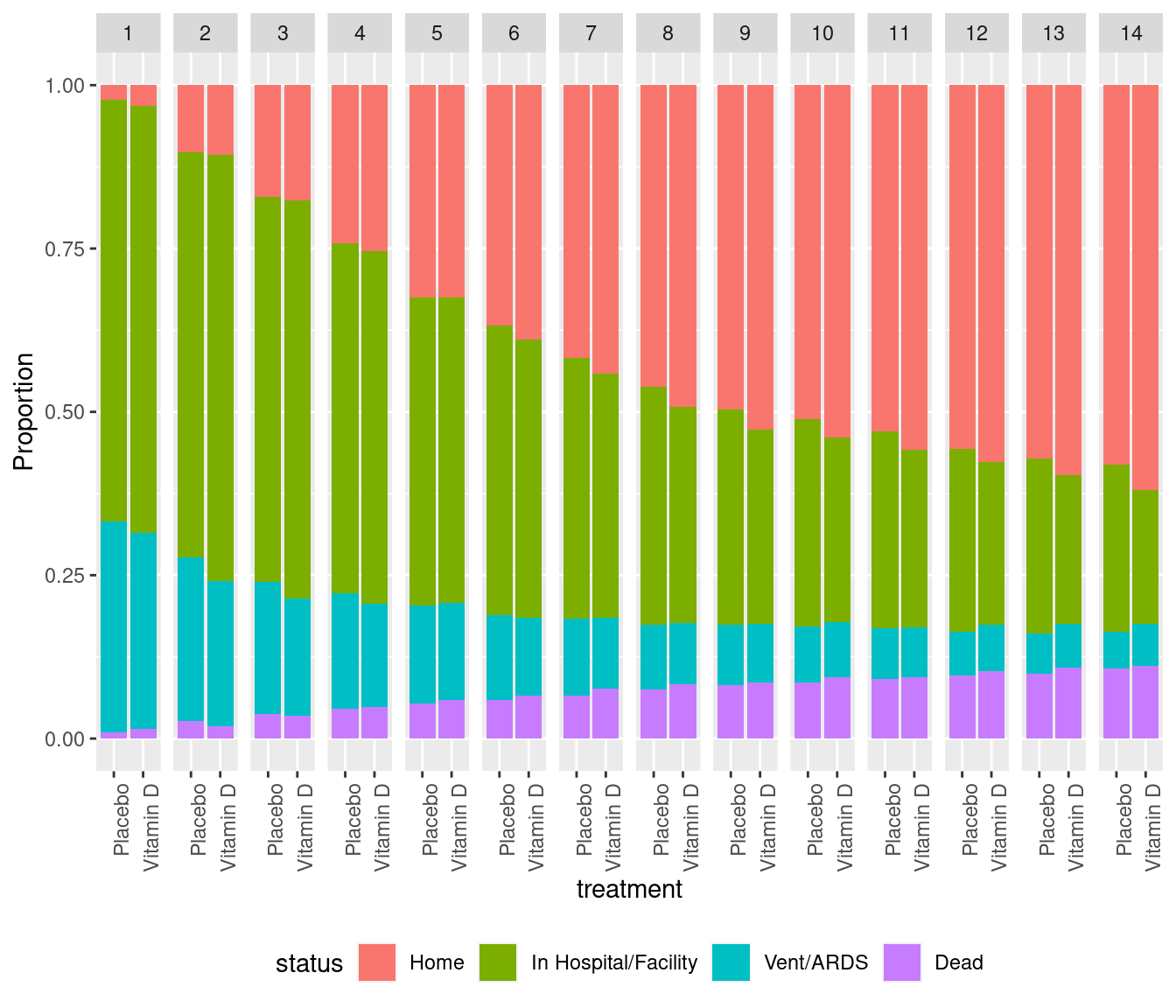

- VIOLET (Petal Network, NEJM 381:2529, 2019)

- Early high-dose vitamin D

- Primary endpoint: mortality (slight evidence for an increase with vitamin D)

- Ordinal endpoint collected each day for 28 consecutive days

VIOLET Day 1 - 14 Ordinal Outcomes

Statistical Power from Using More Raw Data

- Simulation of VIOLET-like studies

| Method | Power |
|---|---|
| Time-to-recovery analysis with Cox model | 0.79 |
| Wilcoxon test of vent/ARDS-free days with death = -1 | 0.32 |
| Longitudinal ordinal model | 0.94 |

Details at hbiostat.org/R/Hmisc/markov/sim.html

General Principles for Choosing Outcome Measures

- Increase power by breaking ties

- Get close to the raw data

- Relevant to patients

- Respect timing and severity of outcomes

- Can do automatic risk/benefit trade-offs by including safety events in an ordinal outcome scale

Take Home Messages

- Don't take sample sizes seriously; consider sequential designs with unlimited data looks and study extension

- α is not a relevant quantity to "control" or "spend" (unrelated to decision errors)

- Choose high-resolution, high-information Y

- Longitudinal ordinal Y is a general and flexible way to capture severity and timing of outcomes

- Always adjust for strong baseline prognostic factors

Quickest Path to Generation of Reliable Knowledge

- When there are two treatment arms

- Use real-world data (RWD) to

- refine the within-patient correlation pattern to model

- estimate variability for (Bayesian) power calculations

- 1:1 randomization with only indirect use of RWD

- Fully sequential trial with multiple decision rules that stop when evidence is strong

- High-resolution longitudinal Y

More Information

- hbiostat.org/endpoint

- datamethods.org

- hbiostat.org/bbr/alpha

- hbiostat.org/bayes/design

- fharrell.com/post/nfl